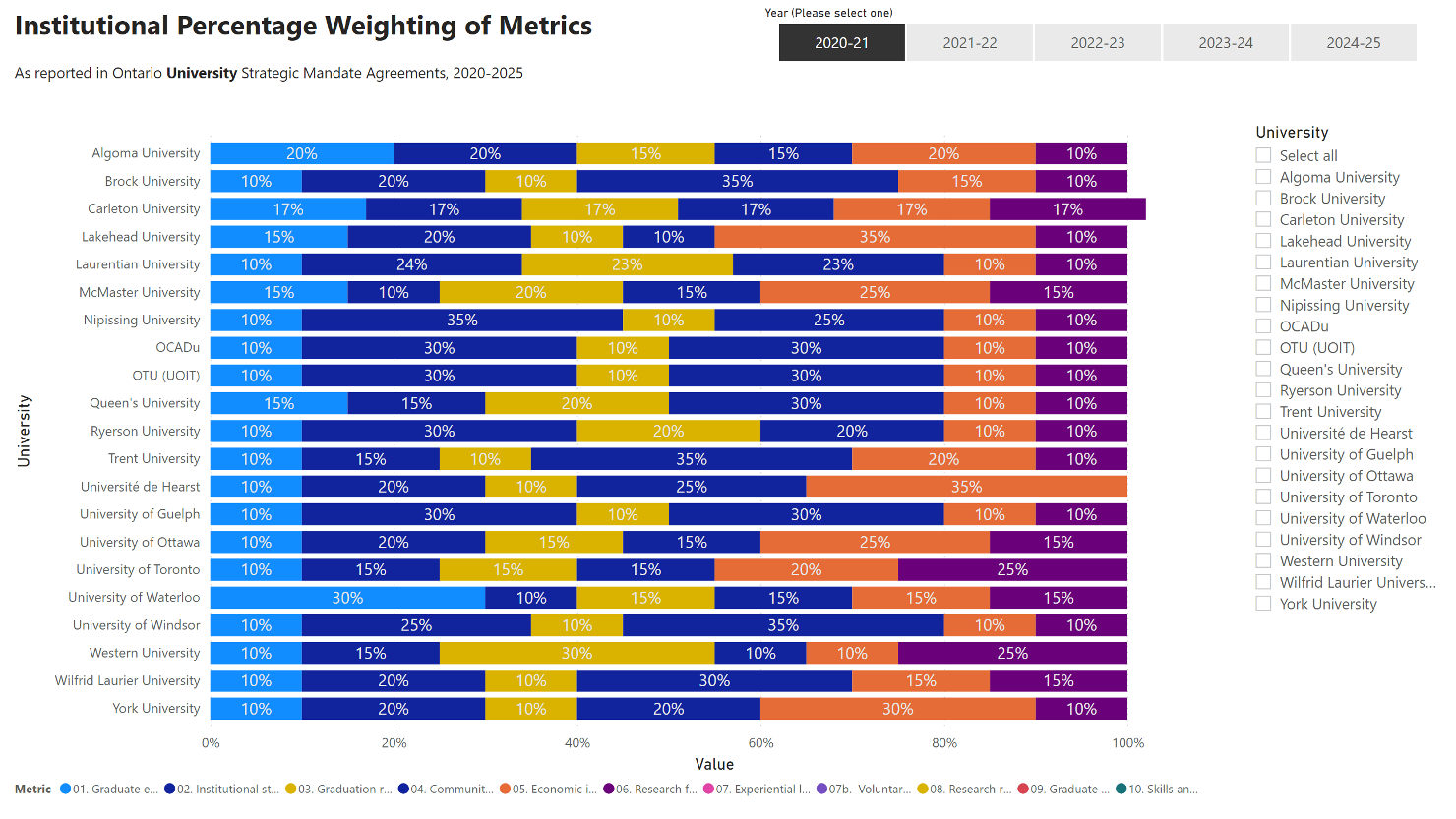

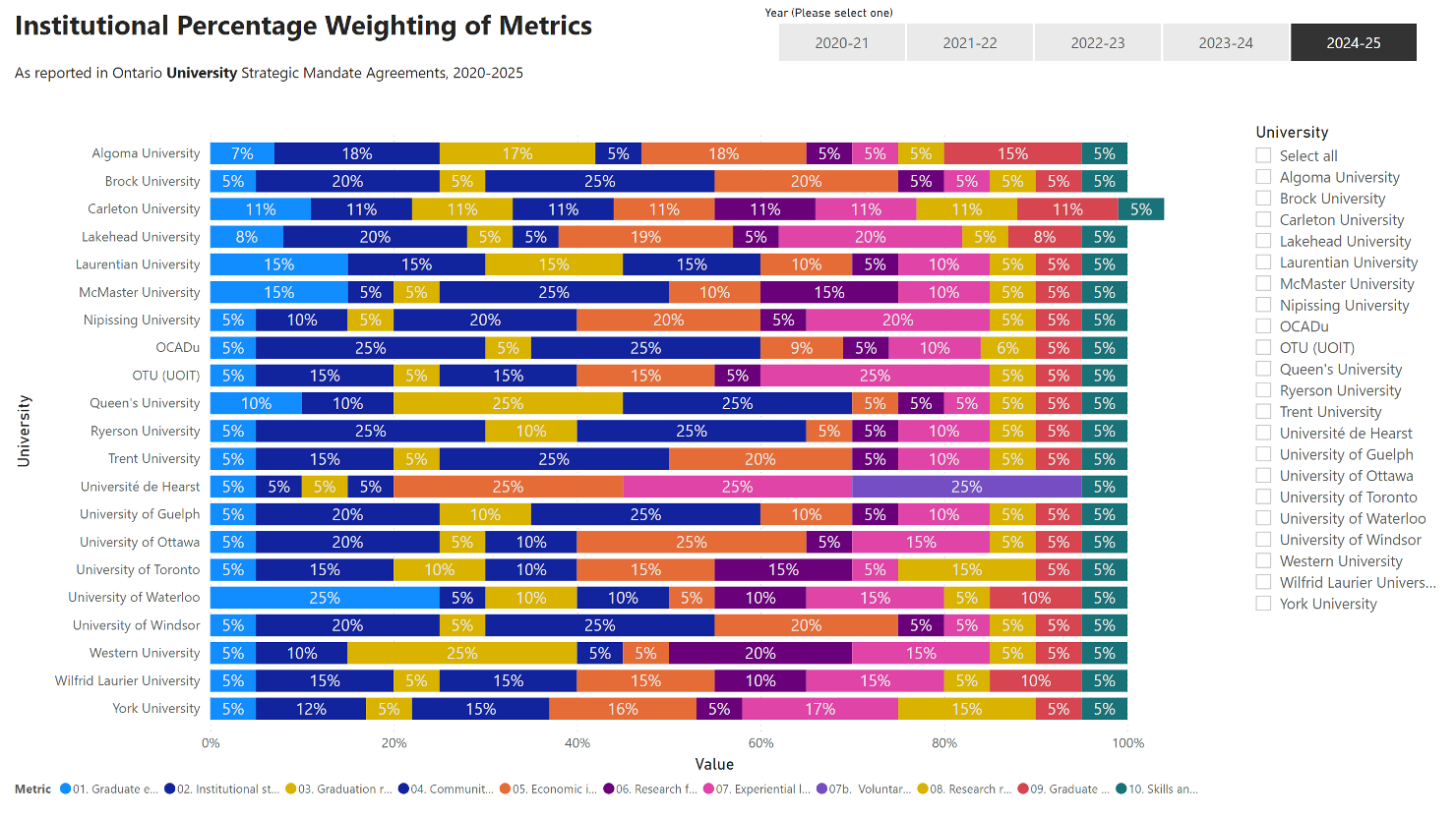

As initially introduced in the SMA3 template, each institution’s SMA3 includes a paragraph in the skills and job outcomes section that gives some preliminary information about the skills and competencies metric:

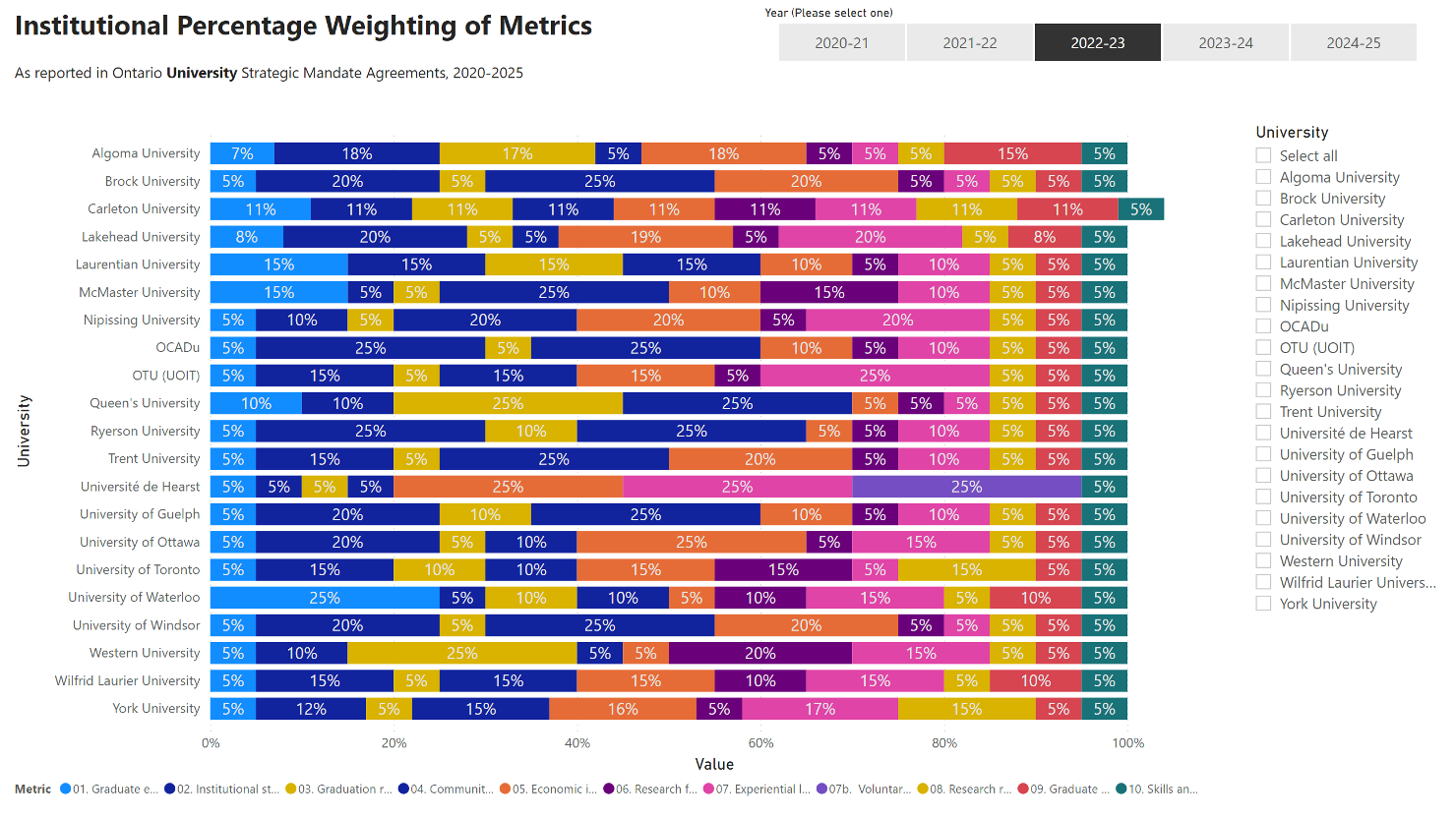

For the skills and competencies metric being initiated for performance-based funding in 2022–23, the Ministry of Colleges and Universities will apply a ‘participation weighting’ of 5% of annual performance-based funding notional allocations for all institutions. Institutional targets will not be set for this metric in SMA3. Participation will be validated and included as part of the SMA3 Annual Evaluation process for performance-based funding.

(MTCU, 2020, as cited in Ryerson University, 2020, p. 7)

The metric is to be a random sample of all undergraduate students and the metric for all institutions will be weighted at 5% starting in the year 2022–23 for participation and public posting of results. The ministry is exploring the administration of the Education and Skills Online assessment tool and will provide more details on the process once they are available. (MTCU, 2020, as cited in Ryerson University, 2020, p. 9)

The SMA3 documents’ description of the metric identifies that the source will be the “Education and Skills Online Assessment, Organisation for Economic Co-operation and Development (OECD)” (Algoma University & MCU, 2021, p. 12).

The skills and competencies metric is uniformly weighted at 5% of funding, which is awarded for institutions’ participation, through a random sample of all undergraduate students, in the OECD’s Education & Skills Online (ESO) assessment. The ESO is the online version of the OECD’s Survey of Adult Skills, an assessment that is part of the Programme for the International Assessment of Adult Competencies (PIAAC), which is delivered in over 40 countries.

The Education & Skills Online (ESO)

The ESO consists of two components.

The first is the Core Assessment Package, which includes the Literacy and Numeracy sections as well as a Problem Solving in Technology-Rich Environments section. The second is the Non-cognitive Assessment Package, which consists of the Skill Use, Career Interest and Intentionality, and Subjective Well-Being and Health sections. There is also a small remedial section (the Reading Components subtest) for test-takers who score low on the initial sections of the Core Assessment Package.

The ESO is an adaptive assessment tool, becoming progressively easier or more difficult depending on the test-taker’s performance. The ESO is expected to take 120 minutes to complete, but it does not need to be completed in one sitting and does not require proctors. Test-takers are given their score immediately upon completion. (Organisation for Economic Co-operation and Development, 2018)

The OECD’s Programme for the International Assessment of Adult Competencies (PIAAC) and the preceding Programme for International Student Assessment (PISA) have been seen by some social scientists as an attempt to turn education into “calculable” and measurable problems. Drawing on critical education research literature, Tsatsaroni and Evans (2014) argued that these types of assessments stratify forms of knowledge (practical and “relevant” vs. academic and disciplinary), create negative connotations of national poor performance, and contribute to the social reproduction of existing divisions and inequalities.

No other established PBF program uses this metric

No other established PBF program uses this metric, but the ESO has been administered across Canada and by many OECD member countries. There is only one instance of the ESO being associated with PSE in Canada as of 2018, as described by Weingarten et al. (2018).

There is no indication of how this assessment will be administered as part of SMA3 beyond the random sample, but the procedural information on the OECD website gives insight into the mechanics, as does HEQCO’s 2018 paper on the Essential Adult Skills Initiative (EASI) (Weingarten et al., 2018).

Costs

The OECD methodology indicates that test-takers are to be given assessment codes that an institution or organization purchases in advance and distributes to them.

The two assessment components of the ESO are sold by the OECD as the Core Assessment Package, the Non-cognitive Assessment Package, and a Bundled Core and Non-cognitive Assessment Package. When purchasing fewer than 5,000 assessments, the Core Assessment Package costs €9.00, the Non-cognitive Assessment Package costs €2.00, and the bundled version costs €11.00. For 5,000 to 10,000 bundled assessments, the price is €10.25 each; up to 25,000 it is €9.75; and the final tier, 150,000 or more bundled assessments, is €7.00 each. At the time of writing, it takes CAD $1.55 to purchase one euro. Ontario graduated 241,112 students from colleges and universities in 2018 (Statistics Canada, 2020).
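The arithmetic behind a province-wide estimate can be sketched in a few lines of Python. This is only an illustration built from the figures quoted above: the function names are hypothetical, the exact tier boundaries are an assumption, and quantities between 25,001 and 149,999 are deliberately left unpriced because no price for that range is quoted here.

```python
EUR_TO_CAD = 1.55                 # exchange rate quoted in the text
ONTARIO_GRADUATES_2018 = 241_112  # Statistics Canada (2020), as cited in the text


def bundled_unit_price_eur(quantity: int) -> float:
    """Return the bundled list price per assessment, in euros.

    Only the tiers quoted in the text are encoded. The exact tier
    boundaries are an assumption, and quantities from 25,001 to
    149,999 raise an error because no price is quoted for them.
    """
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    if quantity < 5_000:
        return 11.00
    if quantity <= 10_000:
        return 10.25
    if quantity <= 25_000:
        return 9.75
    if quantity >= 150_000:
        return 7.00
    raise ValueError("no list price quoted in the text for this quantity")


def bundled_cost_cad(quantity: int, eur_to_cad: float = EUR_TO_CAD) -> float:
    """Total list-price cost in Canadian dollars for `quantity` bundled assessments."""
    return quantity * bundled_unit_price_eur(quantity) * eur_to_cad


if __name__ == "__main__":
    cost = bundled_cost_cad(ONTARIO_GRADUATES_2018)
    print(f"All 2018 graduates, bundled: about CAD ${cost:,.0f}")
```

The same function can be reused for smaller samples by changing the quantity, which makes it easy to see how sensitive the total is to the sample size and to the exchange rate.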

Scenario 1: Delivering this assessment to all Ontario graduates would cost roughly CAD $2.6 million for the bundled assessment (241,112 assessments at the top-tier price of €7.00, converted at $1.55 per euro).

How?

It seems unlikely that a random sample would be all graduating PSE students.

Likely a representative, or simply motivated, subset of graduates will complete the assessment.

There is no indication of how assessment codes will be distributed, how many will be distributed and by whom, or whether MCU or the institutions themselves will cover the costs of the assessments. As the institutions themselves have the strongest ongoing relationship with students, the most likely method of delivery would be institutions contacting graduates and sending them a code to take the assessment. Under this model, MCU could use the 5% funding as an incentive for institutions to complete this task for a target percentage of students, but this is speculation.

Scenario 2: The assessment follows the methodology used by HEQCO, as described by Weingarten et al. (2018) in Measuring Essential Skills of Postsecondary Students: Final Report of the Essential Adult Skills Initiative. Weingarten et al. (2019) also responded to the 2019 Ontario budget announcement of PBF for PSE with another paper, Postsecondary Education Metrics for the 21st Century.

Weingarten et al.’s 2018 paper described the Essential Adult Skills Initiative (EASI) project, which was a large-scale research project undertaken by HEQCO and 20 Ontario PSE partners (roughly a quarter of Ontario’s PSE system by participating institutions’ share of provincial enrolments).

How EASI was it?

The EASI project was designed to measure the literacy, numeracy, and problem-solving skills of incoming and graduating college and university students, with the intent to discover the degree to which students’ skills changed over their studies. EASI was deliberately run as an evaluation of the feasibility of administering ESO-style assessments on a large scale. Colleges and universities chose which undergraduate programs’ students were invited to participate, and gift cards were offered as incentives for completing the assessment.

The EASI project had acceptable participation, and its results were close to the PIAAC 2012 comparators. The finding that too many graduating students demonstrated below-average skills deserves further investigation. The logistical implications were also discussed in the paper: “the EASI model succeeded in simplifying the logistics of administering large-scale assessments, we must note that institutions still contributed a considerable amount of resources, primarily in the form of staff time spent on the project” (Weingarten et al., 2019, p. 70).

The paper concluded that further testing will require either more streamlining of logistics or funds to recognize the burden placed on institutions that administer the ESO. The paper made no mention of the cost of the EASI project, but the 2,483 ESO assessments delivered to college students and the 2,147 delivered to university students would have cost no less than $48,000 (list price). There is also the cost of incentives and coordination. These are not large costs in the context of the $6.5 billion provincial budget allocation to PSE (Government of Ontario, 2020), but the information collected should justify its cost, and it should be clear who will bear that cost.

These two scenarios suggest that completion of the task would be sufficient for funding, which matches the signals sent in the default weighting of 5% and the lack of implementation details.

What if it was an actual pre/post-intervention measure?

Scenario 3: The gap between entry and graduate results in the EASI project’s implementation of the PIAAC could be turned into a performance measure that could have targets set like other metrics. This would be a huge shift in how student success is valued and how a bachelor’s degree is valued.

The OECD’s PIAAC is not a measure of a university or university education itself, but a relatively well-validated measure of skills related to an individual’s ability to operate in modern society. Defining the PIAAC as a measure of PSE, tied to funding, would be a repurposing of the PIAAC that would invalidate the carefully constructed justification and literature it currently operates with.

This is a bad idea. This is not a performance metric.

The OECD ESO is not a performance metric. It’s not going to turn into one in 2022-23.

Implementation of the Skills and Competencies Metric

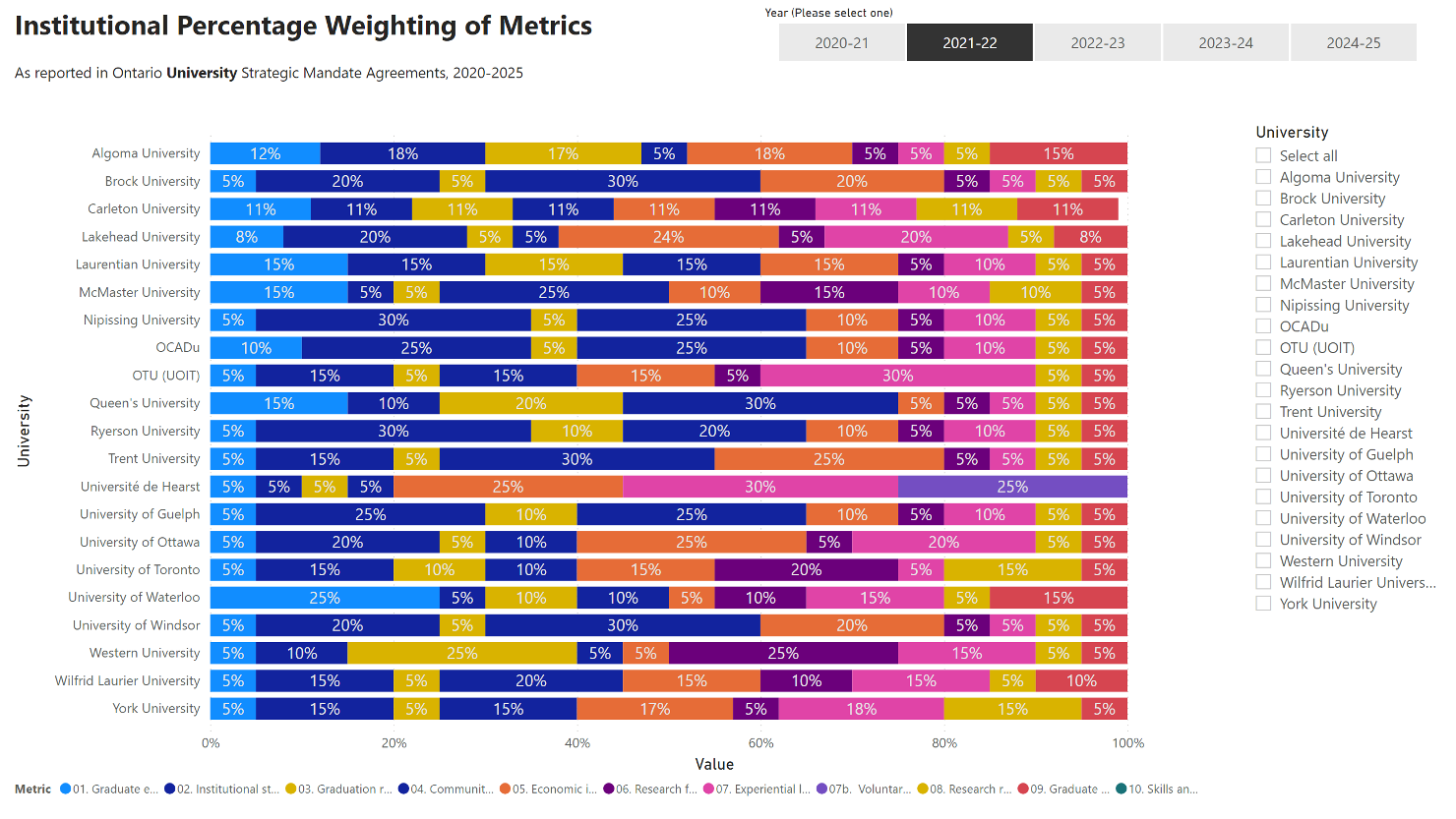

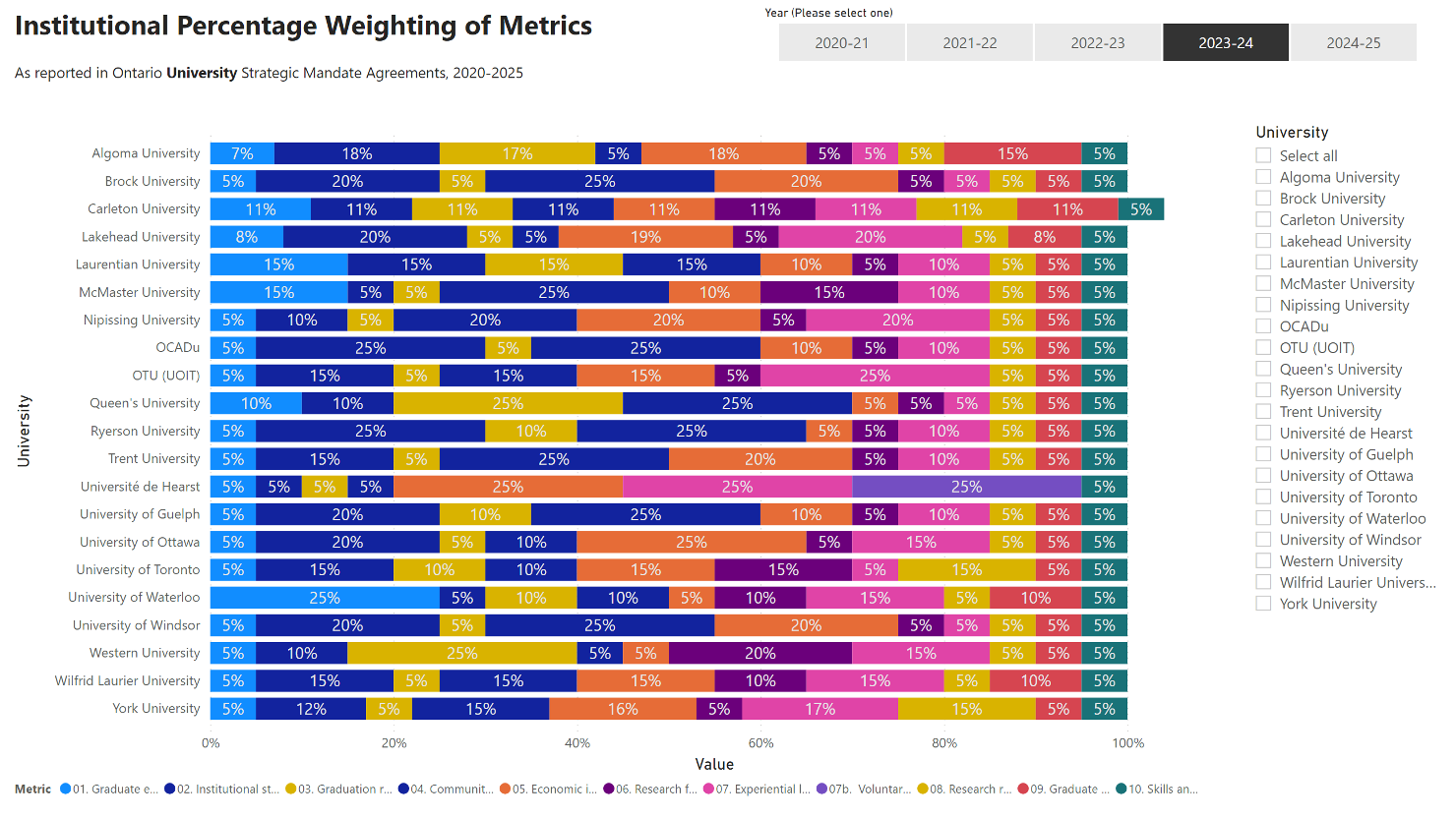

Across the three years of SMA3 in which the Skills and Competencies metric is active (from 2022-23), its weight is mandated at 5%. Institutional narratives on this metric are the shortest of all 10 metrics and simply confirm that the institution will participate.

This fixed amount has had a curious impact on Carleton University’s SMA3 metric weighting. Carleton’s strategy is equal weighting across all metrics, starting with 17% for every metric in the first year and 11% in subsequent years. Combined with the mandated 5% for the skills and competencies metric, Carleton’s 11% weights on its other metrics from 2022–23 to 2024–25 mean that, once the skills and competencies metric is initiated, Carleton’s weights add up to 104%.
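The 104% total is easy to reproduce. The sketch below assumes nine other metrics (10 metrics in total, minus skills and competencies) and uses a hypothetical helper name. It also computes the equal weight that would bring the total to exactly 100%, which suggests, as one plausible reading rather than a confirmed explanation, that the published 11% figures were rounded.

```python
def total_weight(other_metrics: int, other_weight: float, fixed_weight: float) -> float:
    """Sum of all metric weights, in percent."""
    return other_metrics * other_weight + fixed_weight


OTHER_METRICS = 9      # assumption: 10 metrics in total, minus skills and competencies
MANDATED_WEIGHT = 5    # percent, fixed for the skills and competencies metric
PUBLISHED_WEIGHT = 11  # percent, Carleton's published weight on each other metric

# Total using the published (whole-number) weights.
published_total = total_weight(OTHER_METRICS, PUBLISHED_WEIGHT, MANDATED_WEIGHT)

# Equal weight on the other metrics that would make the total exactly 100%.
exact_equal_weight = (100 - MANDATED_WEIGHT) / OTHER_METRICS

print(f"Published weights sum to {published_total}%")
print(f"Equal weight needed for 100%: {exact_equal_weight:.2f}%")
```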

SMA3 Metric Skills and Competencies

| University | 2022-23 | 2023-24 | 2024-25 |
|---|---|---|---|
| Algoma University | 5% | 5% | 5% |
| Brock University | 5% | 5% | 5% |
| Carleton University | 5% | 5% | 5% |
| Lakehead University | 5% | 5% | 5% |
| Laurentian University | 5% | 5% | 5% |
| McMaster University | 5% | 5% | 5% |
| Nipissing University | 5% | 5% | 5% |
| OCADu | 5% | 5% | 5% |
| Queen's University | 5% | 5% | 5% |
| Ryerson University | 5% | 5% | 5% |
| Trent University | 5% | 5% | 5% |
| Université de Hearst | 5% | 5% | 5% |
| University of Guelph | 5% | 5% | 5% |
| University of Ottawa | 5% | 5% | 5% |
| University of Toronto | 5% | 5% | 5% |
| University of Waterloo | 5% | 5% | 5% |
| University of Windsor | 5% | 5% | 5% |
| OTU (UOIT) | 5% | 5% | 5% |
| Western University | 5% | 5% | 5% |
| Wilfrid Laurier University | 5% | 5% | 5% |
| York University | 5% | 5% | 5% |

Carleton’s 104%

In the original web-based version of Carleton’s SMA3, the 2022-23, 2023-24, and 2024-25 weightings each added up to 104%. A copy is still available on the Internet Archive’s Wayback Machine.

I’m rooting for Carleton to receive 104% of their funding in 2022-23 – it’s a signed agreement after all.

At some point Carleton posted their own signed copy of SMA3 which adds up to 100%, and the Ontario.ca website was updated to have more precision in the numbers. Too bad, it was funny while it lasted.

Algoma University, and Ontario Ministry of Advanced Education and Skills Development. “2017-20 Strategic Mandate Agreement: Algoma University [PDF],” 2019. https://www.algomau.ca/wp-content/uploads/2019/01/Strategic-Mandate-Agreement.pdf.

Government of Canada, Statistics Canada. “Postsecondary Graduates, by Field of Study, Program Type, Credential Type, and Gender,” February 1, 2020. https://doi.org/10.25318/3710001201-eng.

Government of Ontario. “The Public Accounts of Ontario 2019-20 | Ontario.Ca,” September 23, 2020. https://www.ontario.ca/page/public-accounts-ontario-2019-20.

Office of the Provost & Vice President, Academic of Ryerson University. “SMA3: An Overview.” PDF. 2019 Consultation Document, 2019. https://www.ryerson.ca/content/dam/provost/PDFs/Overview-B-24oct2019final.pdf.

Ontario Ministry of Training, Colleges and Universities. “Ontario’s Postsecondary Education System Performance/Outcomes Based Funding – Technical Manual.” Ontario Ministry of Training, Colleges and Universities, September 2019. http://www.uwindsor.ca/strategic-mandate-agreement/sites/uwindsor.ca.strategic-mandate-agreement/files/performance_outcomes-based_funding_technical_manual_-_v1.0_-_final_september_419_en.pdf.

Organisation for Economic Co-operation and Development. “Education & Skills Online Technical Documentation.” Paris, 2018. https://www.oecd.org/skills/ESonline-assessment/assessmentdesign/technicaldocumentation/.

Tsatsaroni, Anna, and Jeff Evans. “Adult Numeracy and the Totally Pedagogised Society: PIAAC and Other International Surveys in the Context of Global Educational Policy on Lifelong Learning.” Educational Studies in Mathematics 87, no. 2 (2014): 167–86. https://doi.org/10.1007/s10649-013-9470-x.

Weingarten, Harvey P., Sarah Brumwell, Ken Chatoor, and Lauren Hudak. “Measuring Essential Skills of Postsecondary Students: Final Report of the Essential Adult Skills Initiative.” Toronto: Higher Education Quality Council of Ontario, 2018. https://heqco.ca/wp-content/uploads/2020/02/FIXED_English_Formatted_EASI-Final-Report2.pdf.

Weingarten, Harvey P., Martin Hicks, Amy Kaufman, Ken Chatoor, Emily MacKay, Jackie Pichette, and Higher Education Quality Council of Ontario. “Postsecondary Education Metrics for the 21st Century.” Toronto, May 3, 2019. http://www.heqco.ca/en-ca/Research/ResPub/Pages/Postsecondary-Education-Metrics-for-the-21st-Century-.aspx.